
Perhaps

the newest, most commonly discussed and advertised technology in

machine vision in recent times is Embedded Vision.

But what is it actually? And why has it become so prevalent suddenly?

The easiest way to answer this is to go back in time.

From the beginning

Historically, machine vision systems have consisted primarily of

a camera connected to a computer via some form of analog or digital

interface.

Initially, the machine vision industry used CCTV camera technology

for image sensing. Computers were fitted with analog frame grabbers

that supported cameras with PAL, NTSC and any of the other CCTV

formats. The image was captured by the frame grabber, transferred

to the PC’s memory and there, the image was processed by software.

The software might perform a number of different tasks: enhance the

image, analyse and identify features in the image, and make measurements

on those features to produce an inspection result.
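
As a rough illustration of that enhance, identify, measure and decide sequence, here is a minimal sketch in plain Python. The function names, the threshold and the tiny test frame are all hypothetical and chosen only for illustration; real inspection software would use an imaging library and far more robust feature detection.

```python
def enhance(image):
    """Stretch contrast so the darkest pixel maps to 0 and the brightest to 255."""
    lo = min(min(row) for row in image)
    hi = max(max(row) for row in image)
    if hi == lo:
        # A completely uniform image has no contrast to stretch
        return [[0 for _ in row] for row in image]
    return [[round((p - lo) * 255 / (hi - lo)) for p in row] for row in image]


def find_feature(image, threshold=128):
    """Return the bounding box (left, top, right, bottom) of pixels above threshold."""
    coords = [(x, y)
              for y, row in enumerate(image)
              for x, p in enumerate(row)
              if p > threshold]
    if not coords:
        return None
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return min(xs), min(ys), max(xs), max(ys)


def measure_width(box):
    """Width of the bounding box in pixels."""
    left, _, right, _ = box
    return right - left + 1


def inspect(image, min_width, max_width):
    """Enhance, locate the feature, measure it and return a pass/fail result."""
    box = find_feature(enhance(image))
    if box is None:
        return {"pass": False, "width": None}
    width = measure_width(box)
    return {"pass": min_width <= width <= max_width, "width": width}


# Hypothetical 4 x 6 frame: a bright part, three pixels wide, on a dark background
frame = [
    [10, 10, 10, 10, 10, 10],
    [10, 90, 95, 90, 10, 10],
    [10, 92, 96, 91, 10, 10],
    [10, 10, 10, 10, 10, 10],
]
result = inspect(frame, min_width=2, max_width=4)
print(result)
```

In this sketch the inspection result is just a dictionary holding the measured width and a pass flag; in a production system it would typically drive a reject mechanism or be logged.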

Then came progressive scan digital cameras that used the LVDS

interface, followed by FireWire cameras that harnessed the IEEE 1394 interface

that was being built into some computer motherboards. Later, USB 2.0,

another ubiquitous PC interface, was leveraged by the machine

vision industry to connect to cameras. Along the way, as resolutions

and speeds increased, others emerged: Camera Link, Camera Link

HS, Gigabit Ethernet and CoaXPress, along with the successive revisions of these

as they developed further.

Digital machine vision cameras over the years have become progressively

more sophisticated with in-built functionality to process and improve

the quality of the image. This removed the burden from the PC software

and enabled the complete system to run faster. Features like defect

pixel correction, flat field correction, colour conversion, gamma,

multiple regions-of-interest and many others added value to the

camera but inherently also increased its cost.
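
To give a feel for what two of these in-camera features do, here is a minimal sketch of flat field correction and gamma correction in plain Python. It uses one common textbook formulation and is not taken from any particular camera's firmware; the function names and the three-pixel example sensor are assumptions made for illustration.

```python
def flat_field_correct(raw, dark, flat):
    """Remove per-pixel offset and sensitivity variation.

    corrected = (raw - dark) * mean(flat - dark) / (flat - dark)
    where `dark` is a frame captured with no light and `flat` is a frame
    of a uniformly lit target.
    """
    gains = [[f - d for f, d in zip(frow, drow)] for frow, drow in zip(flat, dark)]
    values = [g for row in gains for g in row]
    mean_gain = sum(values) / len(values)
    return [
        # Pixels with no usable flat-field response are set to 0
        [(r - d) * mean_gain / g if g > 0 else 0.0
         for r, d, g in zip(rrow, drow, grow)]
        for rrow, drow, grow in zip(raw, dark, gains)
    ]


def gamma_lut(gamma, max_value=255):
    """Build a lookup table mapping each input level to its gamma-corrected level."""
    return [round(max_value * (v / max_value) ** (1.0 / gamma))
            for v in range(max_value + 1)]


# Hypothetical 1 x 3 sensor viewing a uniform target;
# the middle pixel is less sensitive than its neighbours
dark = [[2, 2, 2]]
flat = [[102, 82, 102]]
raw = [[52, 42, 52]]

corrected = flat_field_correct(raw, dark, flat)
lut = gamma_lut(2.2)
print(corrected)
print(lut[0], lut[128], lut[255])
```

After correction the three pixels report the same value, which is the point of the technique: a uniform scene should produce a uniform image. Doing this on the camera means the PC never sees the uncorrected data.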

Regardless of the camera technology or the interface used, the humble

PC has been the lynchpin of machine vision systems for a long time.

Intel processors have featured heavily and, for all its warts and

shortcomings, Windows has been the predominant operating

system.

In more recent years, we have also seen the advent of smart

cameras, where the image processing, analysis and measurement

are done within the camera. Several companies, including Teledyne

DALSA, LMI and others,

offer smart cameras with CPUs, FPGAs and GPUs

to accelerate processing. They typically have an in-built user interface

that lets the operator set up the inspection

and measurement task. Although these cameras are quite powerful

and configurable, they are not flexible enough to perform an extremely

wide set of tasks, and they come at a cost.

Where embedded vision is today

So here we are today, still relying on the PC and Windows, or on smart

cameras, and this is where embedded vision steps in.

Due to the relatively recent emergence of very powerful, low-cost,

and energy-efficient processors, it has become possible to incorporate

practical computer vision capabilities into embedded systems, mobile

devices and the cloud. It’s expected that over the next few

years, there will be a rapid proliferation of embedded vision technology

into many kinds of systems.

Where embedded vision will be in the future

But other than the low cost of these powerful new processors, what

else is driving the market to take up embedded vision technology?

The influence of IoT and Industry 4.0

I believe that IoT and Industry 4.0 are contributing factors, because

embedded vision moves the processing to the image source, which

fits well with both. Rather than

having multiple image sensors pushing high-bandwidth data back to

a powerful central PC, embedded vision allows image processing,

analysis and measurement at the sensor, which then outputs lower-bandwidth

data that can be transferred to the cloud.
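
A back-of-the-envelope calculation shows how large the bandwidth difference can be. The sketch below compares shipping every uncompressed frame with shipping one small result message per frame. The resolution, frame rate, message size and camera count are illustrative assumptions, not figures from any specific system.

```python
def raw_stream_mbps(width, height, bits_per_pixel, fps):
    """Bandwidth needed to ship every uncompressed frame, in megabits per second."""
    return width * height * bits_per_pixel * fps / 1e6


def result_stream_mbps(bytes_per_result, fps):
    """Bandwidth needed to ship one small result message per frame, in Mbit/s."""
    return bytes_per_result * 8 * fps / 1e6


# Illustrative assumptions: 8 cameras, 1920 x 1080 monochrome at 8 bits per
# pixel and 30 frames per second, versus a 200-byte result message per frame
cameras = 8
raw = raw_stream_mbps(width=1920, height=1080, bits_per_pixel=8, fps=30)
results = result_stream_mbps(bytes_per_result=200, fps=30)

print(f"raw, per camera:     {raw:.1f} Mbit/s")
print(f"results, per camera: {results:.3f} Mbit/s")
print(f"raw, {cameras} cameras:      {cameras * raw:.1f} Mbit/s")
print(f"results, {cameras} cameras:  {cameras * results:.3f} Mbit/s")
```

Under these assumptions each camera would need roughly 498 Mbit/s to send raw frames but only about 0.05 Mbit/s to send results, so eight cameras sending raw frames would need close to 4 Gbit/s, while eight cameras sending results would need well under 1 Mbit/s in total.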

Autonomous vehicle technology

The shift from driven to autonomous

vehicles is another driver of embedded vision. Autonomous

vehicles require a multitude of image sensors to

provide the necessary feedback for guidance and safety, and there

is a great deal of development currently happening in this area.

The machine vision industry has been very good at piggy-backing

on advances in technology made in larger industries and this is

another example.

Portability and Flexibility

Another driver of embedded vision is the ability of manufacturers

to build cameras with different levels of sophistication, offering

greater flexibility and cost savings.

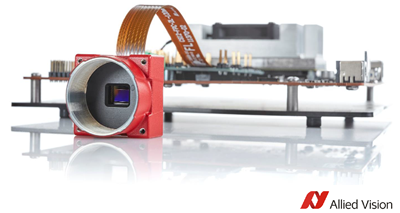

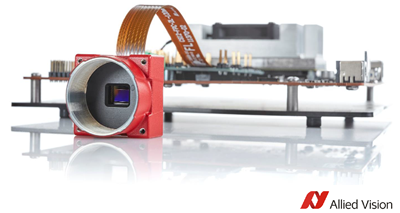

An example is Allied Vision’s Alvium camera series, one of

which is presented in the image above. This camera range includes

the very simple, low-cost, board-level Alvium 1500 cameras, which do

only very basic on-board processing and rely on a low-cost

embedded processor card to do the rest. They connect

to the embedded processor over MIPI CSI-2, a standard video interface

for embedded vision. Where the application requires more processing

on the camera, the Alvium 1800 or 2000 series offer a mid-level

solution in which some of the image processing is done on the camera

and the rest on the embedded processor card. The Alvium 3000

series will do advanced on-camera processing. The Alvium 3000 cameras

are much like the fully featured cameras we have today, with USB3

and GigE outputs.

This modularity gives developers a platform they can move across

as their needs change, because it allows them to

design in the camera that best fits their cost and functionality requirements.

Which embedded processors?

So what are these low-cost, embedded processors we talk about?

To be honest, low-cost is not always the case. There are some embedded

processor cards that cost as much as a PC but they typically offer

very significant performance benefits – for example up to

10x the graphics capability of a PC.

Some of the more common embedded processors being used are the i.MX

family from NXP, which feature ARM Cortex CPUs and run Linux.

The NVIDIA Jetson TX series is another ARM Linux solution, along with the

Jetson Xavier, which pairs ARM CPU cores with a large NVIDIA GPU.

The embedded vision market is, I believe, still in a state of flux

as camera manufacturers try to establish their technology as the

mainstream. I think the takeaways right now are that machine vision

is heading towards a more distributed form of processing, ARM and Linux

will gather momentum, and cost will be at the forefront of most selection

decisions.

Marc Fimeri

Managing Director

Adept Turnkey Pty Ltd
